March 25, 2026

Secure Intra-Cluster Networking in Kubernetes Through WireGuard

Chintan Viradiya

Author

Shyam Kapdi

Contributor

Rakshit Menpara

Reviewer

Implementing secure intra-cluster networking is no longer an optional “add-on” but a prerequisite for compliance frameworks like PCI DSS 4.0 and SOC2. Kubernetes networking was architected for high-velocity reachability, not for enforcing cryptographic identity between internal components. In the default configuration, “East-West” traffic is implicitly trusted, and this model accumulates significant security debt as clusters scale in workload density and multi-tenant complexity.

Logical isolation (Namespaces, RBAC) does not translate to cryptographic isolation. Built-in controls like NetworkPolicies govern reachability, but they do nothing to protect packets from inspection or tampering once the network boundary is breached.

- The Sniffing Risk: Without encryption in transit, a compromised worker node allows an attacker to observe all traffic passing through that node’s network interface.

- The Infrastructure Gap: While mTLS can protect service-to-service calls, it covers only workloads enrolled in the mesh. Traffic outside it, such as CNI overlay traffic, DNS queries, and node-level agents, stays in plaintext, creating a blind spot for auditors and platform teams alike.

Architectural Trade-offs: Platform-Level Security

However, the solution must not become the bottleneck. This brief examines the performance and operational trade-offs of modern encryption substrates, focusing on how to achieve wire-speed privacy without the resource-heavy “Sidecar Tax” of legacy service meshes.

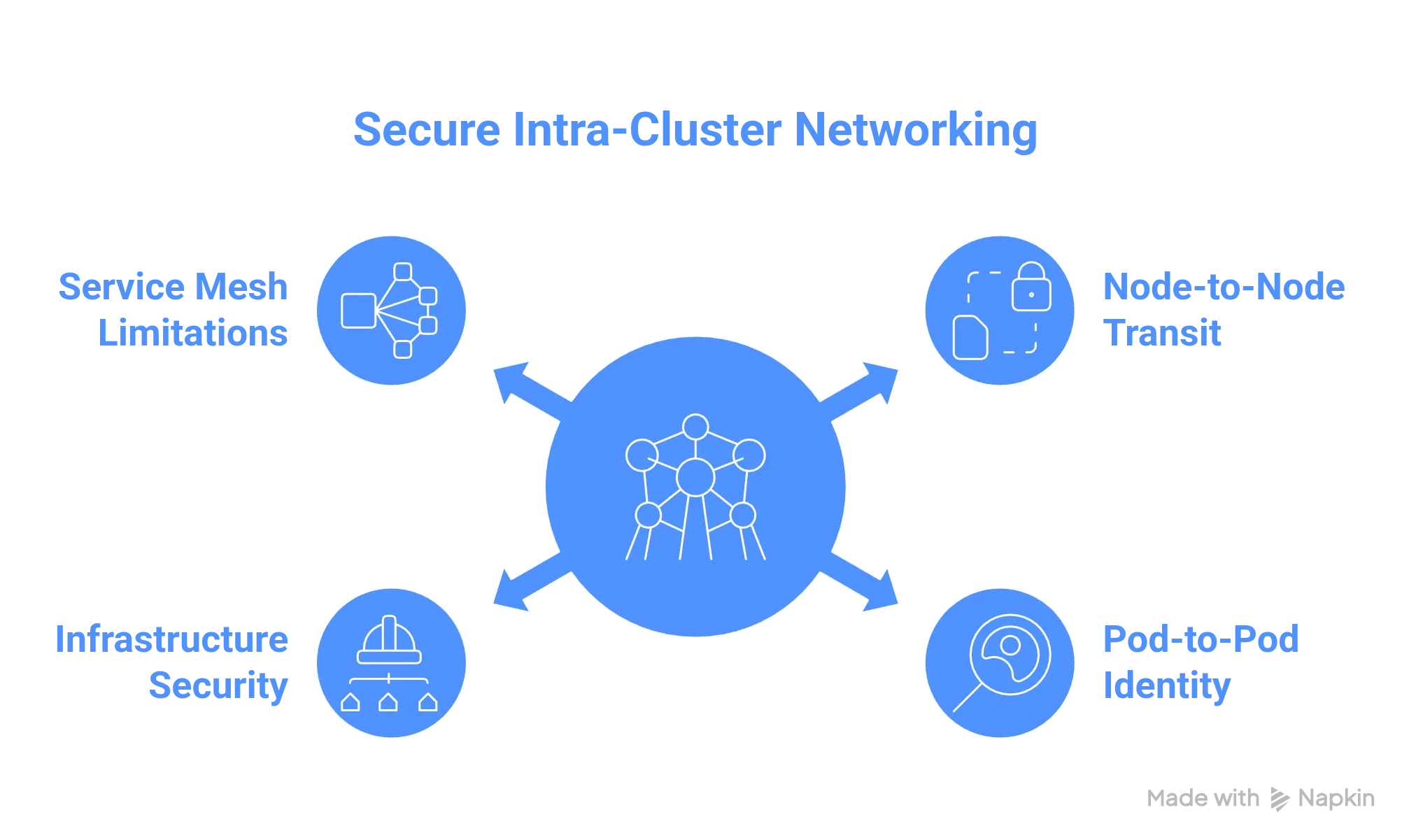

What Is Secure Intra-Cluster Networking in Kubernetes?

Secure intra-cluster networking is the implementation of a transparent, authenticated cryptographic layer across the entire Kubernetes fabric. Unlike standard CNI configurations that prioritize L3 reachability, a hardened data plane enforces confidentiality and integrity at the kernel level, so an attacker who gains a foothold on the network cannot read or alter traffic before it reaches the application layer.

The Infrastructure Gap: Beyond Network Policies

While Kubernetes NetworkPolicies provide essential L4 stateful filtering, they do not provide encryption. In a multi-tenant or hybrid-cloud environment, pod traffic between nodes, along with node-level flows such as DNS, metrics scraping, and storage replication, is often transmitted in plaintext. Control-plane connections such as kubelet to API server are TLS-protected by default, but that protection does not extend to workload traffic.

True intra-cluster security must address:

- Node-to-Node Transit: Encapsulating all inter-node traffic (including CSI storage traffic and node-local agents) within encrypted tunnels.

- Pod-to-Pod Identity: Leveraging eBPF to bind workload identity to traffic at the source, so that a compromised node can speak only for the workloads it actually hosts rather than impersonating pods elsewhere in the cluster.

Solving the “Sidecar Tax”: Why L7 Meshes are Insufficient

Many organizations attempt to solve encryption using service meshes (Istio, Linkerd). While excellent for Layer 7 authorization, they introduce significant architectural debt for infrastructure-level security:

- The Coverage Gap: Meshes cannot secure system daemons (CNI agents, NodeLocal DNS) that run outside the sidecar proxy.

- Resource Contention: Sidecars consume a significant amount of CPU/RAM at scale, often resulting in 15-20% overhead in high-throughput environments.

- Latency Jitter: User-space proxying adds millisecond-level delays, impacting P99 latency for real-time applications.

By moving encryption into the kernel (via WireGuard or IPsec), we provide a cryptographic floor that delivers near-line-rate performance while removing the operational complexity of sidecar certificate management.

What Is WireGuard? Scaling It for Enterprise Infrastructure

WireGuard is a high-performance, kernel-resident VPN protocol that rethinks secure networking for the modern cloud era. Its value to the enterprise lies in operational simplicity: it replaces the fragmented, 30-year-old IPsec stack with a streamlined, “opinionated” data plane designed for high-velocity platform teams.

The Architectural Advantage: Why Auditability is Strategic

While legacy protocols like OpenVPN and IPsec suffer from “cipher suite sprawl,” WireGuard utilizes the Noise Protocol Framework to enforce a fixed set of modern primitives.

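- Minimal Attack Surface: With the kernel module comprising roughly 4,000 lines of code, WireGuard is small enough for human review, and its protocol has been formally verified, which simplifies SOC2 audits. Its fixed primitives (ChaCha20-Poly1305, BLAKE2s) are not FIPS 140-approved, however, so FIPS-mandated environments may still need a validated alternative such as IPsec with approved ciphers.

- Kernel-Space Efficiency: By operating within the Linux kernel, WireGuard avoids the context-switching and copy overhead inherent in user-space VPNs, delivering high throughput on modern high-speed NICs.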

- Stealth and Resilience: Utilizing a 1-RTT (one round-trip time) handshake based on Curve25519, WireGuard is silent to unauthorized scanners, effectively neutralizing “reconnaissance-in-force” attacks.

Enterprise Readiness: Solving the Control Plane Gap

WireGuard’s simplicity is its strength at the data plane, but it creates a “Management Gap” at scale. In an enterprise environment, raw public/private key pairs are not enough.

True enterprise readiness requires:

- Automated Key Lifecycle Management: Rotating thousands of keys across multi-cloud regions without downtime.

- Identity-Aware Routing: Integrating with OIDC/SAML (Okta, Azure AD) to map cryptographic keys to specific user and workload identities.

- Advanced NAT Traversal: Implementing STUN/ICE and relay fallback to ensure connectivity across restrictive corporate firewalls and dynamic IP environments.

Hardening the K8s Data Plane: Mitigating Lateral Movement and Data Exfiltration

In a standard Kubernetes deployment, the network is built for high-velocity reachability, not cryptographic isolation. This “flat” model assumes that the VPC perimeter is impenetrable. However, once a single container or node is compromised, the lack of intra-cluster encryption transforms a localized breach into a cluster-wide catastrophe.

The Vulnerability of the “Inside”: Blast Radius Expansion

Without transparent encryption at the data plane, your infrastructure is susceptible to:

- Node-Level Packet Interception: If a worker node is compromised, an attacker can utilize eBPF or standard kernel utilities to sniff all “East-West” traffic, including internal API keys and PII, as it traverses the node’s network interface.

- Credential Exfiltration: Plaintext service-to-service traffic can reveal bearer tokens, API keys, and forwarded Service Account tokens in transit. Combined with cloud credentials harvested from the metadata service (IMDS) on the compromised node, these allow attackers to escalate privileges from a single pod to the entire cloud account.

- Silent Data Exfiltration: Without cryptographic peer authentication, an attacker positioned on the network path can siphon large transfers, such as database replication streams, that blend in with legitimate internal traffic and bypass standard anomaly detection.

Compliance Gaps: Moving Beyond “Network Trust”

The regulatory landscape (PCI DSS 4.0, HIPAA, GDPR) has shifted. The traditional defense of “our cloud provider isolates the VPC” is no longer sufficient under the Shared Responsibility Model.

- The Auditor’s Shift: Regulators now demand technical controls that ensure “Zero Trust” at the workload level. Relying on plaintext intra-cluster transit is increasingly viewed as an avoidable risk.

- Forensic Integrity: Because WireGuard uses authenticated encryption, any packet modified in transit fails verification and is dropped, giving incident responders confidence that traffic exchanged between authenticated peers was not tampered with on the wire.

The Operational Performance Tax

Many organizations attempt to solve these issues at the application layer using mTLS via service meshes. However, this introduces a significant “Sidecar Tax” - often consuming 15-20% of cluster CPU and increasing P99 latency. By implementing encryption at the kernel level (eBPF/WireGuard), you provide a consistent security posture across AWS, Azure, and on-prem with minimal overhead and zero application-layer refactoring.

High-Throughput K8s Data Plane Security: Scaling WireGuard vs. mTLS

As Kubernetes clusters scale to support high-performance microservices, the “Sidecar Tax” of traditional mTLS becomes a primary architectural bottleneck. Our implementation of WireGuard at the data plane layer provides a transparent, protocol-agnostic cryptographic foundation that operates at the kernel level, delivering wire-speed performance without application-level refactoring.

Deterministic Performance: Kernel-Space vs. User-Space Overlays

In traditional Service Mesh architectures, every packet must traverse a user-space proxy (Sidecar). This introduces significant jitter and CPU contention.

- Line-Rate Throughput: On 10 Gbps links, our WireGuard-enhanced data plane delivers 9.2–9.5 Gbps of throughput by leveraging the Linux kernel’s high-efficiency networking stack.

- Latency Jitter Minimization: By eliminating the user-space hop, the added latency per peer-to-peer connection is typically under 0.1 ms, preserving sub-millisecond P99 tail latency for real-time Kafka or gRPC streams.

- Resource Optimization: IPsec deployments typically rely on AES-GCM and therefore on AES-NI hardware acceleration for efficiency, whereas WireGuard’s ChaCha20-Poly1305 primitives are fast in pure software. Combined with the absence of a user-space proxy, this reduces security-related CPU overhead by up to 40% compared to Envoy-based mTLS.

Cryptographic Identity: Neutralizing the IP-Spoofing Vector

In standard CNI networking, identity is often tied to ephemeral IP addresses - a model that is trivial to bypass on a compromised node.

- Cryptographic Routing: We replace IP-based trust with public-key identity. Every node and pod is a verified “peer” in the cryptographic fabric: the tunnel decrypts a packet only if it was authenticated with a known peer’s key, and accepts the result only if its source IP belongs to that peer.

- Zero-Downtime Key Orchestration: Our control plane automates key rotation and distribution across high-churn environments, ensuring that identity is as ephemeral as the containers it protects, without the manual overhead of traditional PKI.
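WireGuard calls this mechanism cryptokey routing: each peer’s AllowedIPs list acts as a routing table for outgoing packets and as a source-address filter for incoming ones. The toy model below illustrates both directions in plain bash, using a hypothetical table of two nodes with their tunnel IPs and pod CIDRs. It is a simplified illustration of the idea, not WireGuard’s implementation (the kernel uses a longest-prefix-match trie).

```bash
#!/usr/bin/env bash
# Toy model of WireGuard cryptokey routing (illustrative only; not the kernel implementation).
set -euo pipefail

# Hypothetical AllowedIPs table: "<peer> <cidr>" per entry.
ALLOWED_IPS=(
  "node-b 10.0.0.2/32"
  "node-b 10.244.1.0/24"
  "node-c 10.0.0.3/32"
  "node-c 10.244.2.0/24"
)

# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Succeeds if IPv4 address $1 falls inside CIDR $2.
in_cidr() {
  local ip net len mask
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  len=${2#*/}
  mask=$(( len == 0 ? 0 : (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
  (( (ip & mask) == (net & mask) ))
}

# Outbound: choose the peer whose AllowedIPs entry is the longest prefix matching the destination.
route_to_peer() {
  local dest="$1" best_peer="" best_len=-1 entry peer cidr len
  for entry in "${ALLOWED_IPS[@]}"; do
    read -r peer cidr <<< "$entry"
    len=${cidr#*/}
    if in_cidr "$dest" "$cidr" && (( len > best_len )); then
      best_peer=$peer
      best_len=$len
    fi
  done
  [[ -n "$best_peer" ]] || return 1   # no matching peer: the packet is dropped
  echo "$best_peer"
}

# Inbound: after decrypting with the peer's session key, accept only if the
# inner source IP is inside that same peer's AllowedIPs.
accept_from_peer() {
  local peer="$1" src="$2" entry p cidr
  for entry in "${ALLOWED_IPS[@]}"; do
    read -r p cidr <<< "$entry"
    if [[ "$p" == "$peer" ]] && in_cidr "$src" "$cidr"; then
      return 0
    fi
  done
  return 1
}
```

In this model, a packet for 10.244.1.17 is sent to node-b, while a packet that arrives under node-b’s key but claims a source in node-c’s pod range is rejected. That is the property that defeats IP spoofing from a compromised node.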

Architectural “Defense in Depth”

WireGuard doesn’t replace your existing security; it provides the cryptographic foundation on which it sits.

- NetworkPolicy: Decides who is allowed to talk (L4 stateful filtering).

- WireGuard: Ensures that no one else can listen or tamper (L3/L4 integrity and confidentiality).

- Automated MTU Management: We solve the “encapsulation tax” by automating MSS/MTU clamping at the interface level, preventing the packet fragmentation that plagues standard AWS/GCP VPC deployments.

Solving the MTU Tax: Deterministic Performance in Encrypted Overlays

MTU (Maximum Transmission Unit) misconfiguration is one of the most common causes of “silent” performance degradation in encrypted Kubernetes clusters. When encryption headers are added to a packet without reducing the payload size, the resulting “oversized” packets are either fragmented by the kernel or, when the Don’t Fragment (DF) bit is set, dropped along the network path.

The Fragmentation Cliff: Why “Green” Dashboards Lie

MTU issues are notoriously difficult to detect because they disproportionately affect large packets:

- The “Small Packet” Mirage: DNS queries and health checks pass through the tunnel successfully, keeping monitoring dashboards “green” while large-payload API responses or database dumps fail.

- TCP Retransmission Storms: Dropped packets trigger aggressive TCP retransmissions, leading to P99 tail latency spikes that are often misdiagnosed as “noisy neighbors” or application-level bugs.

- PMTUD Black Holes: Many cloud and corporate networks block the ICMP “Fragmentation Needed” messages required for Path MTU Discovery, causing connections to hang indefinitely when they hit an MTU limit.
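Because blocked ICMP turns PMTUD failures into silent timeouts, a practical workaround is to probe the path directly with Don’t Fragment pings and binary-search the largest packet that gets through. The sketch below assumes Linux iputils ping (-M do sets DF, -s sets the ICMP payload size) and an IPv4 path; the probe is passed in as a function name so the search logic can be exercised without a network. Function names and the default search range are illustrative.

```bash
#!/usr/bin/env bash
# Sketch: find the usable path MTU with DF-marked pings when ICMP "Fragmentation Needed" is filtered.
# Assumes Linux iputils ping and an IPv4 path.
set -euo pipefail

# Succeeds if an unfragmentable IPv4 packet of TOTAL size $2 bytes reaches host $1.
probe_ping() {
  local host="$1" size="$2"
  # ping -s sets the ICMP payload; the IPv4 (20 B) and ICMP (8 B) headers come on top.
  ping -M do -c 1 -W 1 -s "$(( size - 28 ))" "$host" > /dev/null 2>&1
}

# Binary-search the largest size in [lo, hi] for which "$probe host size" succeeds; prints 0 if none.
find_path_mtu() {
  local probe="$1" host="$2" lo="${3:-576}" hi="${4:-1500}" best=0 mid
  while (( lo <= hi )); do
    mid=$(( (lo + hi) / 2 ))
    if "$probe" "$host" "$mid"; then
      best=$mid            # fits: search the upper half
      lo=$(( mid + 1 ))
    else
      hi=$(( mid - 1 ))    # dropped or rejected: search the lower half
    fi
  done
  echo "$best"
}
```

For example, find_path_mtu probe_ping 10.0.1.12 prints the largest IPv4 packet size that reached that (hypothetical) peer address. A single lost probe is treated as “too big”, so on lossy links it is worth repeating the search.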

Deterministic Fixes: From Manual Mangling to Orchestrated Alignment

Stable encryption requires a single, consistent truth across the CNI, the cryptographic tunnel, and the underlying VPC fabric.

1. Automated MTU Alignment:

Instead of manual CNI configuration, our platform automatically calculates the effective payload capacity (link MTU minus tunnel overhead) and propagates these settings across the cluster. For a standard 1500-byte link with an IPv4 underlay, we enforce a 1440-byte limit to account for the 60-byte WireGuard encapsulation; an IPv6 underlay adds 80 bytes, lowering the limit to 1420.

2. eBPF-Driven MSS Clamping:

We move beyond legacy iptables mangling. Our control plane injects eBPF programs at the pod interface to dynamically rewrite the Maximum Segment Size (MSS) during the TCP handshake. This ensures that the conversation “caps” itself at a safe size before a single data packet is sent.

3. Infrastructure Observability:

We provide real-time visibility into fragmentation events and ICMP black holes. Our “Fragmentation Heatmap” allows platform teams to identify mismatched nodes across hybrid-cloud or multi-region boundaries instantly.
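The arithmetic behind steps 1 and 2 is simple enough to sketch in a few lines of bash. The overhead figures are the standard WireGuard data-packet sizes (outer IP header, 8-byte UDP header, 16-byte WireGuard header, 16-byte Poly1305 tag). The iptables rule is the legacy clamping baseline that step 2 moves beyond, printed as a string rather than executed; it is not the eBPF mechanism. Function names are illustrative.

```bash
#!/usr/bin/env bash
# Sketch of the MTU/MSS arithmetic for a WireGuard tunnel.
set -euo pipefail

# Outer IP header (20 B IPv4 / 40 B IPv6) + UDP (8 B) + WireGuard header and auth tag (32 B).
wg_overhead() {
  case "$1" in
    4) echo $(( 20 + 8 + 32 )) ;;
    6) echo $(( 40 + 8 + 32 )) ;;
    *) echo "unsupported IP version: $1" >&2; return 1 ;;
  esac
}

# Largest inner packet that fits in one underlay frame: link MTU minus tunnel overhead.
wg_mtu() {
  local link_mtu="$1" underlay_ip_version="${2:-4}" overhead
  overhead=$(wg_overhead "$underlay_ip_version") || return 1
  echo $(( link_mtu - overhead ))
}

# TCP MSS = tunnel MTU minus the inner IP header and the 20-byte base TCP header.
tcp_mss() {
  local mtu="$1" inner_ip_version="${2:-4}"
  if [[ "$inner_ip_version" == 6 ]]; then
    echo $(( mtu - 40 - 20 ))
  else
    echo $(( mtu - 20 - 20 ))
  fi
}

# Legacy iptables baseline: clamp the MSS option on SYN/SYN-ACK packets leaving via IFACE.
mss_clamp_rule() {
  local iface="$1" mss="$2"
  echo "iptables -t mangle -A FORWARD -o ${iface} -p tcp --tcp-flags SYN,RST SYN -j TCPMSS --set-mss ${mss}"
}
```

With a 1500-byte link and IPv4 at both layers, wg_mtu 1500 4 gives 1440 and tcp_mss 1440 gives 1400. If the CNI keeps its own VXLAN encapsulation inside the tunnel, subtract a further 50 bytes before deriving the pod MTU. iptables can also derive the value from the route MTU with --clamp-mss-to-pmtu instead of a fixed --set-mss.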

Implementing WireGuard in Kubernetes

1. Architectural Prerequisites

Before deployment, ensure your environment meets these technical baseline requirements:

- Kernel Support: Nodes must run Linux kernel 5.6+ (where WireGuard is native) or have the wireguard module installed.

- CNI Compatibility: This guide assumes a CNI without built-in encryption (such as Flannel with its default VXLAN backend, or a basic bridge plugin). Note that some CNIs like Cilium and Calico have native WireGuard integration that can be enabled via simple configuration flags.

- Network Access: UDP port 51820 (the conventional WireGuard default; native CNI integrations may use their own port) must be open between all nodes in your Security Groups/Firewalls.
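If you run one of the CNIs with native support, the tunnel can be switched on without the DaemonSet approach described next. The commands below follow the patterns in the Calico and Cilium documentation; they assume Calico’s default FelixConfiguration and an existing Helm-managed Cilium release named cilium in kube-system, so check them against your installed versions before applying.

```bash
# Calico: turn on WireGuard for pod traffic in the default FelixConfiguration.
kubectl patch felixconfiguration default --type='merge' -p '{"spec":{"wireguardEnabled":true}}'

# Cilium: enable transparent WireGuard encryption on an existing Helm release.
helm upgrade cilium cilium/cilium --namespace kube-system --reuse-values \
  --set encryption.enabled=true \
  --set encryption.type=wireguard
```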

2. Strategy: The “Node-to-Node” VPN Tunnel

The most efficient way to achieve this without a native CNI feature is via a DaemonSet that manages WireGuard interfaces on each node.

Step A: Generate Key Pairs

WireGuard relies on public-key cryptography. Each node needs a unique private/public key pair. For a scalable production environment, these should be stored as Kubernetes Secrets.
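As a sketch of that storage step, the helper below wraps a private key file (for example, the privatekey file produced by wg genkey in this step) in a Secret manifest. The Secret name pattern, namespace, and label are hypothetical placeholders; adapt them to however your DaemonSet mounts its keys.

```bash
#!/usr/bin/env bash
# Hypothetical sketch: render a Kubernetes Secret manifest holding one node's WireGuard private key.
# The name pattern, namespace, and label are illustrative placeholders.
set -euo pipefail

render_key_secret() {
  local node="$1" key_file="$2" encoded
  # Secret .data values must be base64; strip newlines so long output is not wrapped.
  encoded=$(base64 < "$key_file" | tr -d '\n')
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: wireguard-${node}
  namespace: kube-system
  labels:
    app.kubernetes.io/component: wireguard
type: Opaque
data:
  privatekey: ${encoded}
EOF
}
```

The output can be piped straight into the cluster, e.g. render_key_secret node-a ./privatekey | kubectl apply -f -, after which the local key file should be deleted. Secrets are only base64-encoded, so enable encryption at rest for etcd and restrict RBAC access to them.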

# Generate keys locally for testing (umask 077 keeps the private key readable only by you)

umask 077

wg genkey | tee privatekey | wg pubkey > publickey

Step B: Configure the WireGuard Interface

On each node, a virtual interface (usually wg0) is created. The technical configuration requires:

- Local Address: A unique IP within a dedicated “Tunnel Subnet” (e.g., 10.0.0.x/24).

- Peers: A list of all other nodes’ public keys and their physical internal IP addresses.
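Putting those two requirements together, a node’s configuration can be rendered from a list of peers. The sketch below emits a wg-quick style file; the tunnel addresses, node IPs, pod CIDRs, and key placeholders are hypothetical. Listing each peer’s pod CIDR in AllowedIPs is one possible design, and it only works if those routes do not conflict with the routes your CNI already installs.

```bash
#!/usr/bin/env bash
# Hypothetical sketch: render a wg-quick style config for one node.
# Peer specs are "PUBLIC_KEY|NODE_IP|TUNNEL_IP|POD_CIDR"; all values are placeholders.
set -euo pipefail

render_wg_conf() {
  local tunnel_ip="$1" private_key="$2" port="$3"
  shift 3
  printf '[Interface]\n'
  printf 'Address = %s/24\n' "$tunnel_ip"
  printf 'PrivateKey = %s\n' "$private_key"
  printf 'ListenPort = %s\n' "$port"
  # 1420 leaves room for the 80-byte IPv6 worst case on a 1500-byte link.
  printf 'MTU = 1420\n'

  local spec pubkey node_ip peer_tunnel_ip pod_cidr
  for spec in "$@"; do
    IFS='|' read -r pubkey node_ip peer_tunnel_ip pod_cidr <<< "$spec"
    printf '\n[Peer]\n'
    printf 'PublicKey = %s\n' "$pubkey"
    # Physical (VPC-internal) address of the peer node.
    printf 'Endpoint = %s:%s\n' "$node_ip" "$port"
    # Cryptokey routing: these ranges are sent to, and only accepted from, this peer.
    printf 'AllowedIPs = %s/32, %s\n' "$peer_tunnel_ip" "$pod_cidr"
  done
}
```

On a node, the result could be written to /etc/wireguard/wg0.conf and brought up with wg-quick up wg0. In a DaemonSet, the same rendering would run inside the pod, with the peer list built from the cluster’s Node objects.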

3. Implementation via Kilo (Recommended Tooling)

Manually managing WireGuard keys for 50 nodes is operationally impractical. Kilo is an open-source WireGuard mesh built specifically for Kubernetes that automates key generation, distribution, and peer configuration.

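1. Install Kilo

Kilo stores per-node settings as annotations on Kubernetes Node objects and uses a Peer CRD for WireGuard peers outside the cluster. Apply the CRDs first, then the DaemonSet manifest:

kubectl apply -f https://raw.githubusercontent.com/squat/kilo/main/manifests/crds.yaml

kubectl apply -f https://raw.githubusercontent.com/squat/kilo/main/manifests/kilo-k3s.yaml

(Note: Choose the manifest corresponding to your distribution, e.g., k3s, kubeadm, or RKE.)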

2. Annotate Nodes

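Kilo needs to know which address other nodes should use as this node’s WireGuard endpoint. Replace <node-name> and <internal-ip> with each node’s name and private IP. By default, Kilo only encrypts traffic between locations, so when every node shares the location “cluster”, start Kilo with the --mesh-granularity=full flag to encrypt all node-to-node traffic.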

kubectl annotate node <node-name> kilo.squat.ai/location="cluster"

kubectl annotate node <node-name> kilo.squat.ai/force-endpoint="<internal-ip>:51820"

3. Verify the Mesh

Once the DaemonSet is running, confirm that a Kilo pod is running on every node, then inspect the WireGuard interface on any node. wg show should list each peer with a recent “latest handshake” timestamp:

# Run on the host node (Kilo names its interface kilo0 by default)

ip addr show kilo0

wg show kilo0

4. Technical Nuances & Performance Tuning

To avoid fragmentation, you must also account for the MTU (Maximum Transmission Unit).

Because WireGuard encapsulates packets, it adds 60 bytes of overhead over an IPv4 underlay or 80 bytes over IPv6. If your standard Ethernet MTU is 1500, set your WireGuard and CNI MTU to 1420 or lower (1440 is sufficient when the underlay is IPv4-only). Failure to do this will result in packet fragmentation and severe application latency (especially with TLS handshakes, whose certificate chains produce large packets).

| Feature | Technical Specification |
|---|---|
| Cipher | ChaCha20-Poly1305 (authenticated encryption) |
| Port | 51820/UDP |
| MTU Recommendation | 1420 (for a 1500-byte physical MTU) |
| Routing Table | 51820 (wg-quick’s default policy-routing table for full-tunnel routes) |

5. Validation of Encryption

To prove that the intra-cluster traffic is actually encrypted, perform a packet capture on the physical interface (e.g., eth0) of a worker node while pinging a pod on a different node.

# On Node A

tcpdump -i eth0 udp port 51820

If configured correctly, you will see encrypted UDP packets between node IPs, but you will not see the raw HTTP/gRPC traffic of your applications.
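A packet capture proves encryption at one point in time; a recurring check can confirm that every peer is still completing handshakes. WireGuard re-keys roughly every two minutes while traffic flows, so the sketch below flags peers whose latest handshake is missing or older than three minutes. It reads the output of wg show <interface> latest-handshakes (a public key and a UNIX timestamp per line, with 0 meaning never). The 180-second threshold is an assumption, and idle peers without persistent keepalive will also be reported as stale.

```bash
#!/usr/bin/env bash
# Sketch: list WireGuard peers whose latest handshake is missing or older than a threshold.
# Input (stdin): output of `wg show <interface> latest-handshakes`.
set -euo pipefail

stale_peers() {
  local now="$1" max_age="${2:-180}" pubkey ts
  while IFS=$'\t' read -r pubkey ts; do
    [[ -n "$pubkey" ]] || continue
    # A timestamp of 0 means no handshake has ever completed with this peer.
    if (( ts == 0 || now - ts > max_age )); then
      echo "$pubkey"
    fi
  done
}
```

Usage on a node: wg show kilo0 latest-handshakes | stale_peers "$(date +%s)". An empty result means every peer has handshaken recently.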

Architectural Decision Record: Evaluating WireGuard for Enterprise K8s

Deciding between a kernel-level encryption substrate (WireGuard) and an application-layer service mesh (mTLS) is a trade-off between Data Plane Performance and Control Plane Granularity.

When WireGuard is the Strategic Requirement

WireGuard should be the foundational choice for organizations prioritizing infrastructure-level integrity and deterministic performance:

- Compliance without Refactoring: Supports data-in-transit controls for PCI DSS 4.0 (Requirement 4.2.1) and SOC2 Type II audits. WireGuard provides a cryptographic floor that protects every protocol (HTTP, gRPC, DB-native) without touching application code.

- High-Throughput / Low-Latency Workloads: Ideal for Kafka clusters, database replication, and high-frequency microservices where the 15%+ “Sidecar Tax” of mTLS is cost-prohibitive.

- Blast Radius Containment: In multi-tenant environments, WireGuard ensures that a compromised node cannot be used to sniff other tenants’ traffic as it crosses the network between nodes.

- Unified Security Substrate: Enables “Secure-by-Default” networking, treating encryption as a transparent infrastructure utility rather than a per-app configuration.

Managing the L7 Transition: Addressing WireGuard’s Limits

While WireGuard secures the “pipe,” it does not natively understand application-level logic. Enterprise implementations must address these gaps through a managed control plane:

1. The Key Orchestration Challenge:

Raw WireGuard requires manual public-key distribution. In high-churn clusters, this is an operational failure point. Our platform automates the entire key lifecycle - distribution, rotation, and revocation - ensuring peer identity is as ephemeral as your pods.

2. Bridging L3 to L7:

WireGuard operates at L3/L4. If your security policy requires URL-path filtering or gRPC method-level authorization, WireGuard must be paired with an L7-aware policy layer, such as an eBPF-based CNI with L7 policy support or a service mesh. This allows you to retain L7 granularity (canary deployments, circuit breaking) while offloading the heavy cryptographic lifting to the kernel.

3. Hybrid-Cloud Connectivity:

Unlike standard CNIs, our implementation facilitates secure tunnels between K8s pods and legacy VM workloads, providing a consistent Zero-Trust Network Access (ZTNA) posture across your entire hybrid estate.

Conclusion

Kubernetes’s decision to leave transport security to the network implementation was a pragmatic one, enabling the ecosystem to innovate. Today, that innovation has converged on a clear best practice: intra-cluster encryption should be the default, not the exception.

WireGuard stands out as the premier tool for this mission. It closes a foundational security gap by blending modern, “opinionated” cryptography with high-performance execution and radical operational simplicity. It doesn’t just add a feature; it provides the essential cryptographic floor upon which every other part of your cluster safely sits.

Looking forward, the industry trend is unmistakable. Modern CNIs, like Cilium and Calico, are making WireGuard integration a “one-line” enablement. This isn’t just a security upgrade; it’s an operational relief. It removes an entire category of risk and compliance headaches from the platform team’s plate, allowing you to focus on scaling rather than worrying about internal packet sniffing.

For the senior architect evaluating this today, the question is no longer if you should encrypt East-West traffic, but how soon you can flip the switch. WireGuard offers a path that is both technically superior and operationally sane. It allows you to build clusters that aren’t just connected - they are truly secure, from the inside out.

Frequently Asked Questions

Secure intra-cluster networking is the practice of encrypting and authenticating all east–west traffic inside a Kubernetes cluster, including pod-to-pod and node-to-node communication. It protects internal traffic from snooping, tampering, and lateral movement - even if a node is compromised.

Application-layer TLS only protects traffic that is explicitly designed to use it. Infrastructure traffic - such as CNI overlay traffic, DNS queries, health checks, and metrics scraping - often bypasses TLS, leaving large portions of the cluster unencrypted.

WireGuard encrypts traffic at Layer 3 (network layer), making it protocol-agnostic. It transparently encrypts all node-to-node and pod-to-pod traffic using cryptographic identities based on public keys, without requiring application changes or sidecars.

MTU issues usually appear as intermittent packet loss, increased tail latency, stalled large payloads, and degraded throughput - often without obvious CPU or memory pressure. These issues are frequently misdiagnosed as application bugs or cloud networking problems.

WireGuard should not be used alone when you require Layer 7 authorization, per-request identity, traffic shaping, or advanced observability. In such cases, it should be combined with a service mesh or application-layer security controls.
